Enterprises believe that their AI is working perfectly

They have created sophisticated harnesses

They have run tests and evals

They have red-teamed it to identify security gaps

They have connected it to observability tools

But AI still fails in production

How Realm Labs stops AI failures

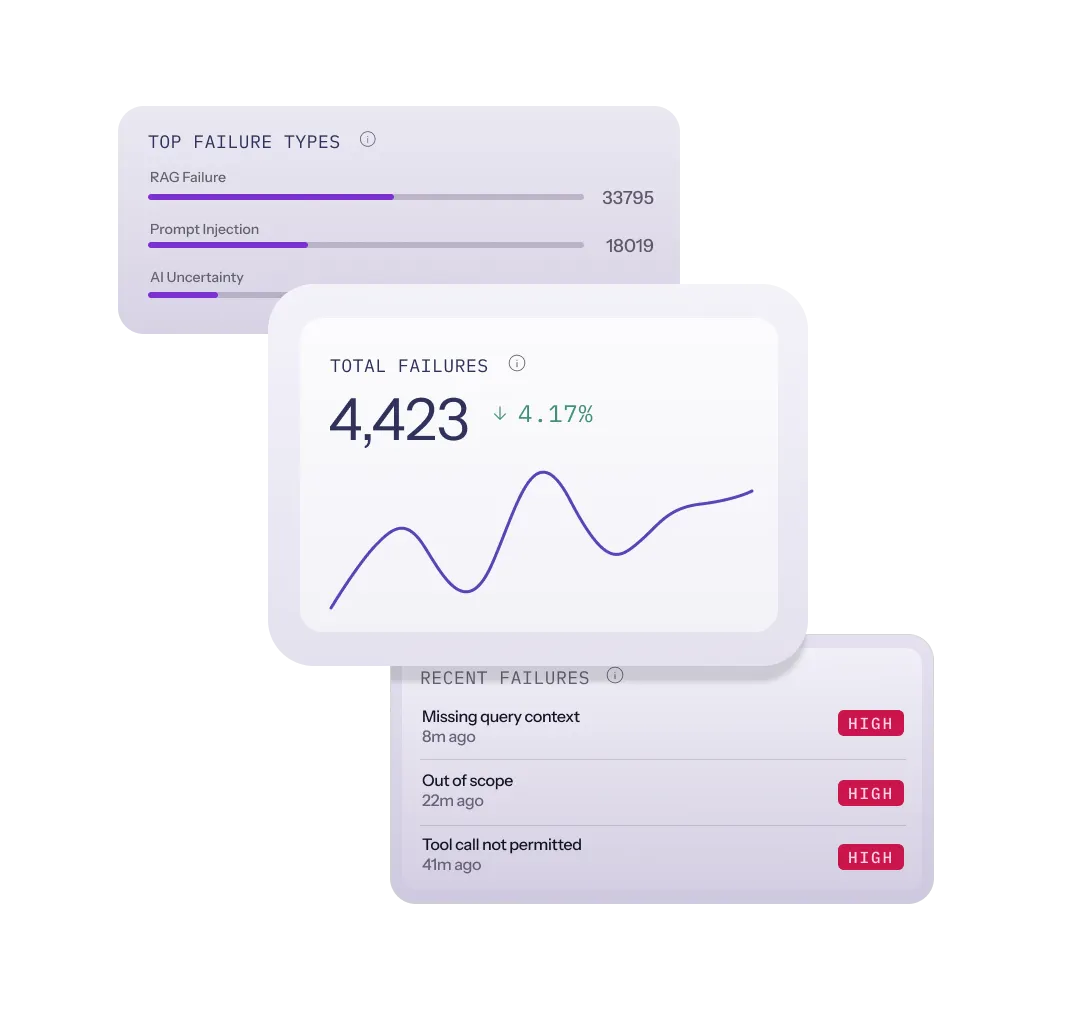

Detect

See the failures everyone else misses.

Realm Labs watches every AI interaction as it happens and surfaces the ones that go off the rails: hallucinations, off-goal answers, leaked data, unsafe behavior.

Respond

Stop failures from causing harm.

The moment an interaction crosses the line you set, Realm steps in. Block it, rewrite it, route it, log it. Your AI keeps shipping. The failure doesn't.

Improve

Turn every failure into a fix.

Every caught failure becomes a lesson your AI carries forward. Patterns surface. Drift gets corrected. The same incident doesn't happen twice.